Past Projects

Here is a selection of my past projects, mostly investigations conducted during my time at SRF, plus some interactive news applications.

Here’s what we found in the Collection #1-5 password leaks

March 2019.

Direct link.

This was an investigation deep into the heart of the “Collection #1-5” password leaks that appeared on the web in early 2019. We showed that more than 3 million Swiss email addresses and – more disquietingly – over 20’000 email addresses of Swiss authorities and providers of critical infrastructure appear in the leaks.

Aside from the usual broadcast and online channels, we also released a short YouTube video for a younger audience that explains the dangers of using a weak password. For demonstration purposes, I gained access to the Instagram account of our host, Lena, within a couple of hours.

In this project, I used a so-called “big data technology”, Spark, for the first time. While we at SRF Data usually publish the source code for our data processing, I decided against doing so in this case. Instead, I wrote a blog post that explains the process and helps other journalists tackle similar “big data” problems.

Deep Fakes – indistinguishable from magic

August 2018.

Any sufficiently advanced technology is indistinguishable from magic.

- Arthur C. Clarke

When so-called “deep fakes” popped up in late December on Reddit, they caused quite a stir. At SRF Data, about half a year later, we wanted to explain the technology with self-made examples. Without external help, we created a series of deep fake experiments ourselves, using an open-source AI framework. To our surprise, we could produce an astonishingly good fake, replacing the face of one of our most prominent news anchorwomen.

In a lengthy article, we then went into the nitty-gritty of how the technology behind deep fakes works – publishing probably the nerdiest article about deep fakes in mass media up to that date.

To explain the subject, we only used animated GIFs like the one above, and some demonstration videos.

Aside from the usual broadcast and online channels, we also released a short YouTube video for a younger audience that explains what deep fakes are – and what dangers they could pose.

The dark chamber of the Swiss prosecution system

August 2018.

If a Swiss prosecutor wants to put somebody in custody during an investigation or needs to employ surveillance methods, he or she needs to get the okay of a so-called “Zwangsmassnahmengericht”, a special court responsible for these “compulsory measures”, which may be applied only in special circumstances.

These special courts were introduced in 2011. Since then, they have assessed countless applications by state prosecutors, but have remained largely opaque and secret – an actual “dark chamber”.

For the first time, my team thoroughly investigated the (often hidden) statistics behind the secret court orders. The resulting number is rather disquieting: 97 percent. That’s the share of applications that get approved. In other words, state prosecutors almost always get a “thumbs up” for their invasive actions. In that sense, these rather new courts don’t really seem to be a barrier for law enforcement.

The research stirred up quite a political uproar – many parliamentarians, for example, have assured us that they’d like to go over the legislation and make some adjustments. Also, experts have come up with the idea of an attorney for human rights who should be heard during the decision-making process.

The Swiss criminal justice system employs an intransparent algorithm to assess its inmates

June 2018

In this research, we showed that the Swiss criminal justice system uses a simple but intransparent algorithm to categorize inmates into three risk classes: A, B and C. C-class inmates in particular have to undergo more thorough screening and are under increased scrutiny, potentially impacting their right to parole. Our research showed that the algorithm, when applied in different cantons (the Swiss equivalent of provinces), produced very different results: Sometimes, C-class inmates would be the majority, sometimes A-class, etc. The officials didn’t have an explanation for this and wanted to look into it.

Also, while the existence of the algorithm had previously been publicly known, its weights and exact mechanism had been a black box. Under our journalistic pressure, the responsible authority finally released the inner workings of the system for public scrutiny.

The Swiss police use a dubious risk assessment software

April 2018

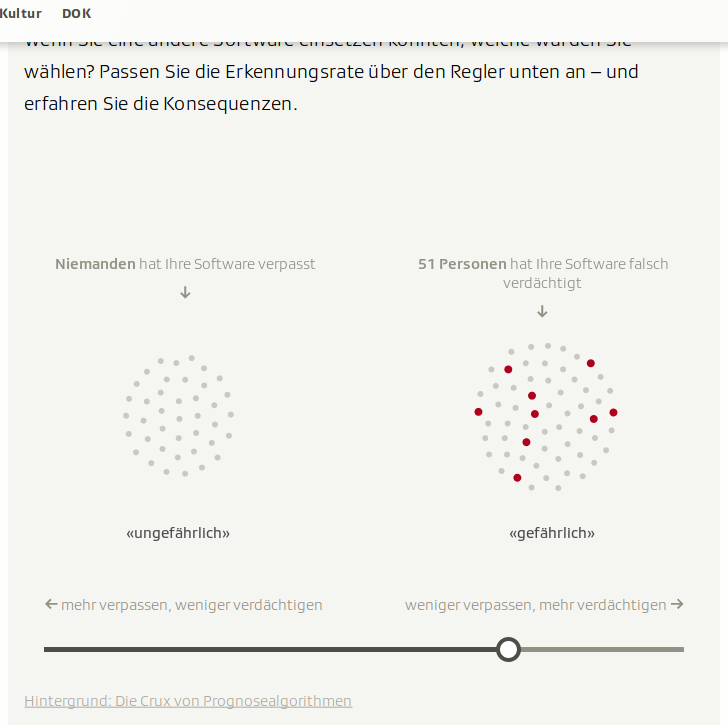

In this investigation, I showed that the Swiss police use dubious software to assess the risk of individuals being a “potential danger to the public”. While the use of the software had already been publicly known at that point, I exclusively uncovered some studies showing that the software does not actually perform very well. In fact, out of three people it deems “potentially dangerous”, two actually aren’t.

The Swiss police and other state actors use more and more automated & algorithmic systems that take the burden of hard decisions off of them. I think that now is the right time to lay a special focus on such systems and investigate their (hidden) biases.

This story came together with an interactive simulation that explained the trade-off between false positives and false negatives.
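The trade-off between false positives and false negatives can be illustrated with a minimal Python sketch. This is not the code behind the published simulation: the function name `confusion_counts`, the risk scores, the outcomes and the thresholds are all made up for illustration.

```python
def confusion_counts(scores, labels, threshold):
    """Count (TP, FP, FN, TN) when every score >= threshold is flagged."""
    tp = fp = fn = tn = 0
    for score, is_dangerous in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_dangerous:
            tp += 1  # correctly flagged
        elif flagged and not is_dangerous:
            fp += 1  # flagged, but harmless (false positive)
        elif not flagged and is_dangerous:
            fn += 1  # missed (false negative)
        else:
            tn += 1  # correctly left alone
    return tp, fp, fn, tn


# Hypothetical risk scores and true outcomes, invented for this example.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [True, False, False, False, True, False, False, False]

for threshold in (0.25, 0.65, 0.85):
    tp, fp, fn, tn = confusion_counts(scores, labels, threshold)
    print(f"threshold={threshold}: false positives={fp}, false negatives={fn}")
```

With this toy data, a low threshold misses nobody but flags four harmless people, while a high threshold flags no harmless person but misses one dangerous one. At the middle threshold, three people are flagged and two of them are harmless, which is the kind of ratio described above.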

Pendlerland – A personalized view on Swiss commuting patterns

May 2017

For some, Switzerland is one big city with high-speed trains and highways functioning as tram and bus lines – people commute from Bern to Zurich like they would from one district to another. Indeed, Switzerland has some of the highest commuter rates in the world, and there’s a plethora of statistics available.

At SRF Data, we took these data sets and tried to find a personal approach to them, beyond just reporting numbers with charts. We came up with an interactive and adaptive article that changes its content and layout based on the commuting journey the reader enters.

Based on this, the reader is presented with a fully personalized view on the topic of commuting.

Not only did I preprocess and analyze the raw data with R, I also came up with some pretty neat GIF graphics that add some eye candy to the article.

These are basically a sequence of ggplot’s `geom_point` graphics.

My colleagues from swissinfo.ch translated the article into Russian, Chinese, Spanish and Japanese.

Identifying a large number of fake followers on Instagram

October 2017

Being a so-called influencer is the dream job of the moment for a lot of young people. Getting a wealth of free products, or even hard cash, in exchange for an Instagram post is enticing, and the advertising industry seems to have discovered a new, effective way of approaching target groups.

However, accusations started appearing that the followers of many influencers are, in fact, fake. Could these accusations be true? There had never been a systematic study on the subject – nobody, neither in nor outside of Switzerland, had ever tried to thoroughly quantify the fake follower problem on Instagram.

So that’s what we at SRF Data did. We trained a machine learning model to automatically classify 7 million Instagram accounts regarding their “fakeness”. By doing so, we found out that roughly a third of these accounts, following 115 Swiss influencers, are indeed fake.

Some influencers had more than 50% fake followers, which raises questions about the integrity and authenticity of these follower bases. Consequently, the publication caused quite a stir in the influencer economy.

If you want to know more about the methodology behind this project, look at this making-of or at the original source code behind the analysis.

Mapping urban sprawl in Switzerland

December 2016

Urban sprawl is one of Switzerland’s (few) big environmental problems. Since 1985, the population has grown by more than 30 percent, and over the same period, an area the size of Lake Geneva has been plastered with concrete.

In our interactive explainer «Bauland» we present facts and figures regarding urban sprawl, but the core element is a feature where readers can choose their own municipality and then switch between different years to see how urban sprawl has changed its face. This visualization is based on a very detailed Swiss statistic, where every hectare (10,000 square meters) is surveyed every few years and classified into the categories forest (dark green), farmland (bright green), settlement (dark grey) and unproductive area such as glaciers (bright grey).

The project was one of three nominees for the prestigious Swiss Press Online Award 2017.

Here’s how 670’000 people speak German

August 2016

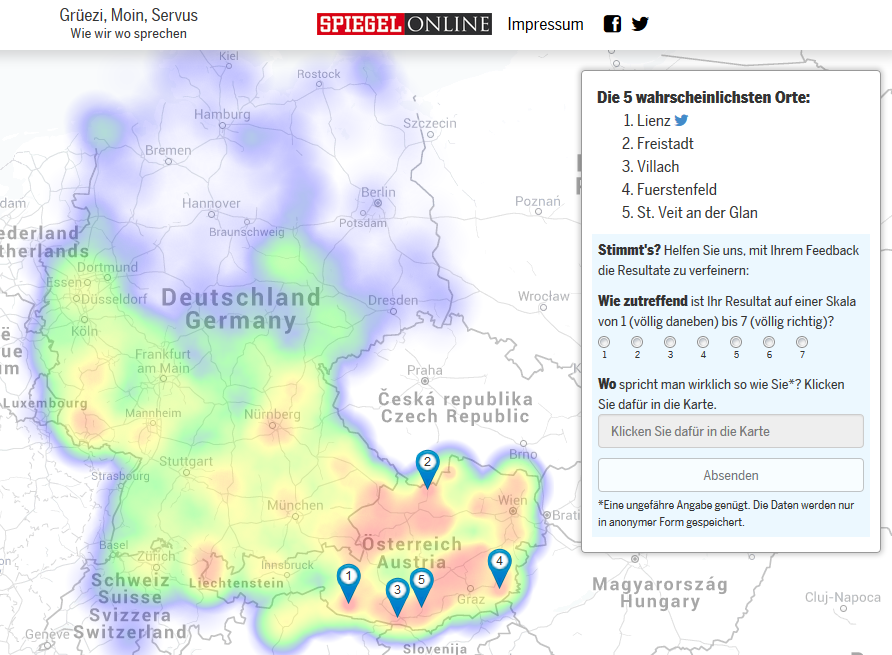

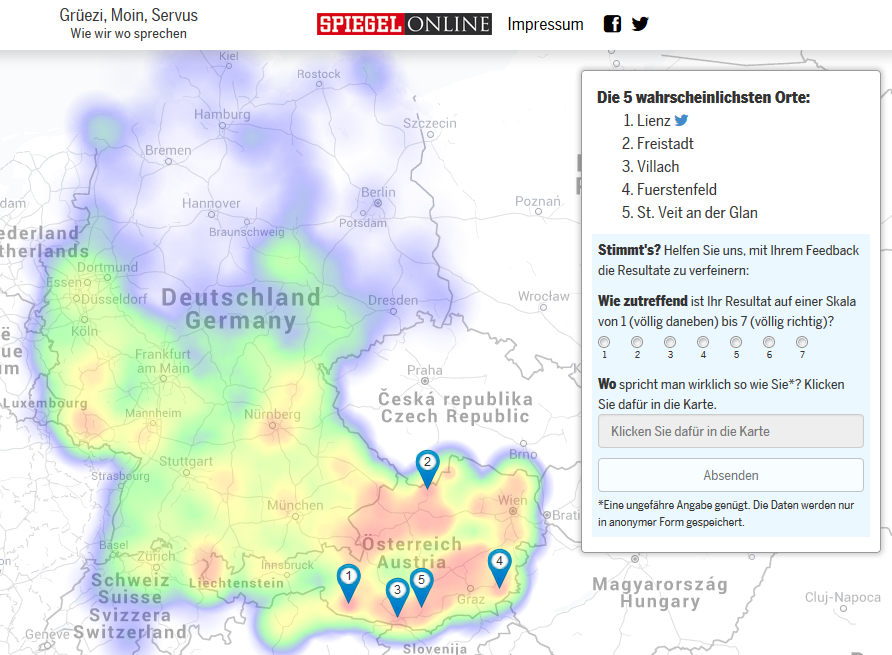

In April 2015, when I was still working at Tages-Anzeiger, we published a hugely successful dialect quiz. After a week or two, we had over 2 million unique visitors, also thanks to the co-publication by Spiegel Online.

The quiz predicted someone's most likely cities of residence, and users could give feedback on that (see the form on the right side above).

Now comes the thing that stunned me the most: Over a third of all visitors actually filled out that form – we ended up with over 670’000 responses, i.e. people’s answers to the 25 questions and their self-reported place of residence as WGS84 coordinates.

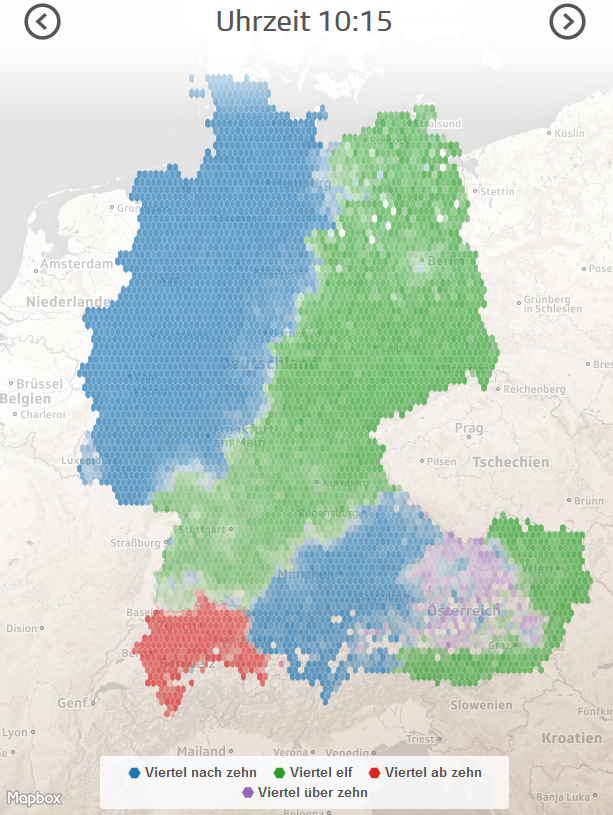

In the R statistical environment, I aggregated these point data into hexagons and exported them to GeoJSON/TopoJSON. Now we had 25 different maps (one for each of the 25 initial questions, or rather words) that showed the regional distribution of answers (or rather pronunciations), based on the biggest online dialect survey ever conducted in Europe. We published these maps on the website of the Swiss public broadcaster (SRF) as well as on tagesanzeiger.ch and spiegel.de.
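To give an idea of what aggregating point data into hexagons means, here is a minimal sketch. The original work was done in R, so this Python version is only an illustration, not the code I used. The function names, the cell size and the sample responses are hypothetical, and treating coordinates as flat x/y values is a simplifying assumption (real WGS84 coordinates would be projected first).

```python
from collections import Counter, defaultdict


def hex_cell(x, y, size):
    """Return the axial (q, r) index of the pointy-top hexagon containing (x, y)."""
    q = (3 ** 0.5 / 3 * x - y / 3) / size
    r = (2 / 3 * y) / size
    # Cube rounding: round all three cube coordinates, then recompute the one
    # with the largest rounding error so that they still sum to zero.
    cx, cz = q, r
    cy = -cx - cz
    rx, ry, rz = round(cx), round(cy), round(cz)
    dx, dy, dz = abs(rx - cx), abs(ry - cy), abs(rz - cz)
    if dx > dy and dx > dz:
        rx = -ry - rz
    elif dy > dz:
        ry = -rx - rz
    else:
        rz = -rx - ry
    return rx, rz


def dominant_answer_per_hex(responses, size):
    """Map each hexagon to the most frequent answer among the points inside it."""
    per_cell = defaultdict(Counter)
    for x, y, answer in responses:
        per_cell[hex_cell(x, y, size)][answer] += 1
    return {cell: counts.most_common(1)[0][0] for cell, counts in per_cell.items()}


# Hypothetical responses: (x, y, chosen variant of one word).
responses = [
    (0.0, 0.0, "variant_a"),
    (0.1, 0.1, "variant_a"),
    (0.1, -0.1, "variant_b"),
    (1.732, 0.0, "variant_b"),
]
print(dominant_answer_per_hex(responses, size=1.0))
```

Each hexagon then carries a single value, here the most frequent variant, which is what gets colored on the map. Exporting the hexagon outlines to GeoJSON would be a separate step that this sketch leaves out.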

Vested interests of Swiss universities

April 2016.

In our largest data-driven research so far, we examined the vested interests of Swiss universities. We researched, among other things, more than 1000 secondary employments of professors and more than 300 sponsored professorships. The investigation resulted in publications in dozens of different radio and television programs of the Swiss public broadcaster SRF.

The research launched a national debate on the independence of Swiss universities. Over the course of the following year, some universities implemented systems for more transparency. Meanwhile, we were transparent ourselves and published our curated and tediously preprocessed database on GitHub.

The project was awarded the prestigious “Prix Média Newcomer” of the Swiss Academies of Arts and Sciences.

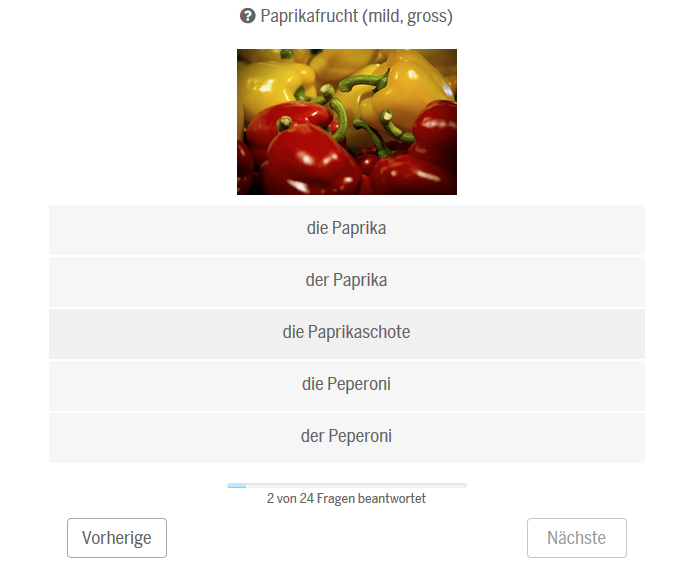

«Sprachatlas» – an interactive dialect quiz for the German-speaking region

April 2015.

Inspired by the hugely successful New York Times dialect quiz, our team at Tages-Anzeiger, consisting of me and Marc Brupbacher, teamed up with language scientist Dr. Adrian Leemann (back then conducting research at the University of Zurich) to launch a similar application for the German-speaking region. Dr. Leemann provided the data and I was responsible for coding the frontend. I mainly used AngularJS, and LeafletJS for the map.

Our quiz was very similar to the NYT one: Users have to answer 25 questions on how certain words are pronounced in their region (I was baffled to learn that there are more than 20 different ways of saying that you have the hiccups).

Based on the answers, a probabilistic model calculated the most likely cities of residence, and based on that, a kind of heatmap showed the likely region of residence.
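The exact model came from the research side and is not described here, so the following is only a rough sketch of how such a prediction could work in principle. It assumes a naive Bayes-style approach with equal prior weight for every city, which is my assumption for illustration and not necessarily what the quiz used. The function name, city names, questions and answer shares are all hypothetical.

```python
import math


def rank_cities(user_answers, answer_shares, floor=1e-3):
    """Rank cities by the normalized likelihood of the user's answers.

    answer_shares[city][question][answer] is the (hypothetical) share of
    people in that city who use that answer. Unknown combinations fall
    back to a small floor value so a single gap does not zero out a city.
    """
    log_scores = {}
    for city, per_question in answer_shares.items():
        total = 0.0
        for question, answer in user_answers.items():
            share = per_question.get(question, {}).get(answer, floor)
            total += math.log(max(share, floor))
        log_scores[city] = total
    # Turn log-likelihoods into probabilities (equal prior for all cities).
    top = max(log_scores.values())
    weights = {city: math.exp(score - top) for city, score in log_scores.items()}
    norm = sum(weights.values())
    ranked = [(city, weight / norm) for city, weight in weights.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Made-up answer shares for two placeholder cities and two questions.
answer_shares = {
    "city_a": {"q1": {"x": 0.8, "y": 0.2}, "q2": {"x": 0.5, "y": 0.5}},
    "city_b": {"q1": {"x": 0.2, "y": 0.8}, "q2": {"x": 0.5, "y": 0.5}},
}
ranking = rank_cities({"q1": "x", "q2": "y"}, answer_shares)
print(ranking)
```

The per-city probabilities could then be spread over the map to produce something like the heatmap mentioned above.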

On the result screen, users were also able to rate their personal prediction and to share the results via Twitter. The answers from the feedback form are now an invaluable data source for new research conducted by Dr. Leemann.

Fortunately, we found a worthy partner in Spiegel Online for publishing the project. This allowed us to reach a huge audience: In the first 2-3 days, we had over 1.5 million unique visitors. To me, this project is a very good example of how journalists and scientists can launch awesome applications together and profit from each other – journalists get interesting and new data sets, and scientists get a platform to publish research that would otherwise remain in the academic domain.

Switzerland’s dual-use exports

May 2015 to January 2018

Dual-use goods are goods that can be used for both civil and military purposes. One example of such goods is so-called IMSI catchers, devices used to surveil mobile phones. In Switzerland, these goods are governed by special legislation – unlike in other countries, where they are treated as conventional arms exports. At SRF Data, we made the effort to parse and visualize the recently released data from the State Secretariat for Economic Affairs SECO.

The interactive visualization allows the reader to dig into the highly detailed dual-use exports data.

Because our data processing workflow is fully reproducible, we can update and re-publish the visualization every year as soon as SECO releases new data. We already did that in 2015, 2016, 2017 and 2018. The data and methodology are freely available on GitHub, as with other stories published by SRF Data.